How To Use Formative Assessment Data To Guide Instruction

You gave a quick exit ticket. You scanned the results. Half your class missed the mark on the concept you just taught. Now what? Knowing how to use formative assessment data is the difference between reteaching the same lesson on autopilot and making a targeted adjustment that actually moves students forward.

Most teachers collect formative data regularly through think-pair-shares, mini quizzes, thumbs up/thumbs down, and journal entries. The collection part isn’t the problem. The problem is what happens next. Too often, that data ends up in a pile (physical or mental) without a clear plan for turning it into instructional decisions. And without that plan, even the best assessment strategies lose their power. It’s a gap we talk about often here at The Cautiously Optimistic Teacher, where practical classroom strategy meets realistic expectations about what teachers can actually pull off during a busy school day.

This guide walks you through a step-by-step process for interpreting formative assessment results, grouping students based on what the data reveals, and adjusting your instruction in real time. Whether you’re working with exit tickets, classroom polls, or written responses, you’ll leave with a concrete framework you can use tomorrow.

What formative assessment data is and isn’t

Before you can figure out how to use formative assessment data effectively, you need a clear picture of what it actually is. Many teachers blur the line between formative and summative data without realizing it, which leads to misreading what the data is telling them. Summative assessments, like end-of-unit tests, final projects, and report card grades, measure what students learned after instruction ends. Formative data works differently. You collect it during learning, while you still have room to change what you’re doing.

What counts as formative assessment data

Formative data is any evidence of student understanding you gather while instruction is still happening. It doesn’t need to look like a test. An exit ticket where students explain a concept in two sentences is formative data. A show of hands asking students to signal their confidence level is formative data. A quick scan of student work during independent practice, where you notice three students making the same error, is formative data. The format matters far less than the timing and intention behind it.

Formative data is not about recording a final verdict on student learning; it’s about taking a real-time snapshot of where students are so you can decide what comes next.

Here are common formative data sources you can pull from your existing classroom routines:

- Exit tickets (written responses at the end of a lesson summarizing key learning)

- Mini whiteboards or response cards students hold up simultaneously

- Think-pair-share observations where you listen in and note specific student language

- Short written responses or journal prompts tied to the day’s objective

- Classroom polls using hands, sticky notes, or digital response tools

- Anecdotal notes you jot during small group work or independent tasks

What formative assessment data is not

Formative data is not a permanent label for a student, and treating it like one is one of the most common ways teachers misuse it. A student who misses the mark on a formative check isn’t necessarily struggling in general. They might be missing one specific piece of prior knowledge, or they may have misread the question, or the concept was introduced too quickly. The data points to a specific gap at a specific moment, not to a fixed characteristic about that student’s ability.

Formative data is also not a standalone number. A class average of 58% on an exit ticket tells you something went sideways, but it doesn’t tell you what or why. You need to look at the actual student responses, the patterns across the room, and the specific errors students made to turn that number into a useful decision. When you stop at the percentage without reading the underlying evidence, you lose the most actionable part of the whole process. A score is a signal. The student work behind it is the real data.

Finally, formative data doesn’t belong in your gradebook as a scored performance metric. Using it for grading changes how students engage with it. When students know a quick check counts toward their grade, they focus on being right rather than being honest about what they understand. Keeping formative assessment separate from grades lets you collect cleaner, more accurate information about where students actually are.

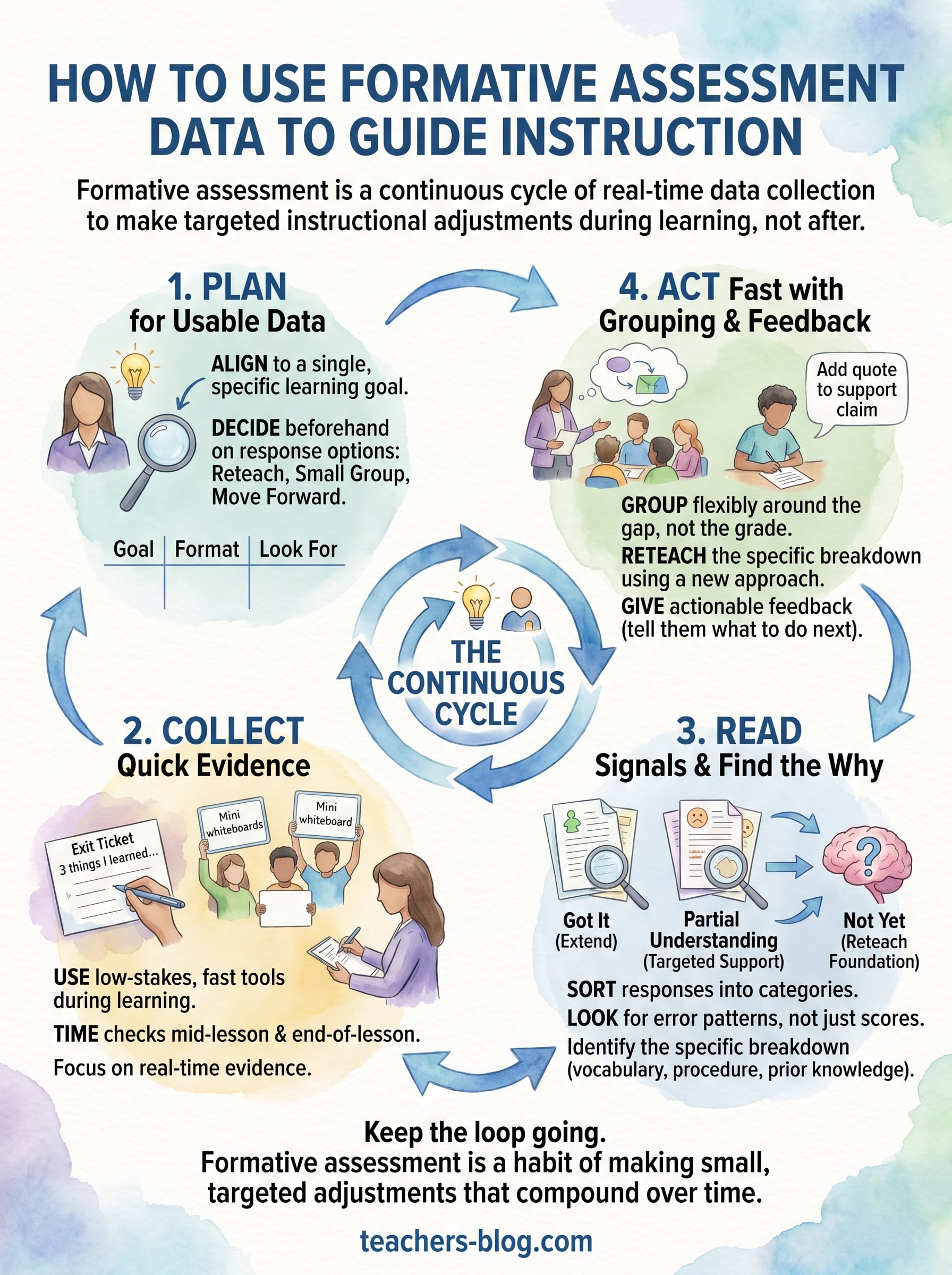

Step 1. Plan for data you can actually use

Most formative assessment problems start before the lesson does. When you design a check without deciding what you’ll do with the results, you end up with data that points nowhere. Planning your assessment around a specific purpose is the step most teachers skip, and it’s the one that makes every other step in this process actually work.

Match your assessment to a single learning goal

Before you choose your formative tool, lock in the specific learning target you’re checking. Not the whole unit and not a general sense of how students are doing, but one concrete objective. For example, instead of checking "understanding of theme," check whether students can "identify two pieces of textual evidence that support a stated theme." The narrower your target, the more actionable your results will be when you look at them after class.

The more tightly your assessment connects to one skill or concept, the easier it becomes to act on what students show you.

Here’s a quick planning template to align your assessment before the lesson:

| Learning Target | Assessment Format | What I’m Looking For |
|---|---|---|
| Students can identify theme with evidence | Exit ticket (2 sentences) | Specific quote + explanation |
| Students can solve two-step equations | Mini whiteboard | Correct process, not just answer |
| Students can summarize a nonfiction passage | Journal prompt | Main idea + 2 key details |

Decide what you’ll do with each possible result

Understanding how to use formative assessment data starts with planning your response options before the lesson, not after. Ask yourself three questions: What will you do if most students got it? What will you do if about half got it? What will you do if almost nobody got it? Having a rough answer to each of these means you won’t freeze when you look at the results after the bell rings.

Your plan doesn’t need to be detailed. A simple decision rule like "if over 80% got it, move forward; if 40 to 80%, pull a small group; if under 40%, reteach to the whole class" gives you enough structure to move quickly without over-planning a lesson that hasn’t happened yet.
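If you tally results in a spreadsheet or a short script, the decision rule above fits in a few lines of Python. Treat this as a minimal sketch rather than a prescription: the function name `next_move` is made up for illustration, and the 80% and 40% cutoffs are simply the ones from the example rule, so swap in whatever thresholds match your own plan.

```python
def next_move(num_correct: int, num_students: int) -> str:
    """Map the share of students who met the target to a planned response.

    Thresholds are assumptions taken from the example rule in the text:
    over 80% -> move forward, 40 to 80% -> small group, under 40% -> reteach.
    """
    if num_students <= 0:
        raise ValueError("num_students must be positive")

    share = num_correct / num_students

    if share > 0.80:
        return "move forward"
    if share >= 0.40:
        return "pull a small group"
    return "reteach to the whole class"
```

For a class of 25 where 14 students met the target, `next_move(14, 25)` lands in the middle band and suggests pulling a small group. The point is not the code itself but having the rule written down before the lesson starts.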

Step 2. Collect quick evidence during learning

Once you have a plan, you need to collect evidence without turning your lesson into a testing session. The goal is to gather usable information quickly while keeping students focused on learning. The best formative checks are low-stakes, fast, and built into what you’re already doing. You’re not adding a separate assessment layer; you’re reading the room with intention.

Choose tools that don’t slow the lesson down

The format you pick should fit the pace of your lesson. A five-question written quiz mid-class pulls students out of their learning momentum. A well-placed exit ticket, a quick poll, or a simultaneous response strategy keeps things moving while still giving you concrete evidence about where students are. The simpler the tool, the more likely you’ll actually use it consistently.

The best formative check is the one you’ll actually run when you’re thirty minutes into a lesson and realize students are confused.

Here are practical collection methods that fit naturally into a class period:

- Exit ticket (3-2-1 format): Students write 3 things they learned, 2 questions they still have, and 1 thing they want to explore further

- Mini whiteboard responses: Students write their answer and hold it up simultaneously so you can scan the room in seconds

- Sticky note sorting: Students write their response on a sticky note and place it in a "got it" or "still confused" column on their way out

- Cold call with a twist: Rather than asking one student, ask all students to write their answer first, then call on a few to share

- Observation notes during work time: You circulate with a simple class roster and mark who needs support as you scan student work

Time your checks to catch problems early

Don’t wait until the last five minutes of class to find out students missed a foundational concept twenty minutes ago. Build one quick check into the middle of your lesson and one at the end. A midpoint check might be as simple as asking students to summarize the concept so far in one sentence on a sticky note before you move to the next part of your instruction. Midpoint data often pays off the most, because you still have time left in the period to respond.

Step 3. Read the signals and find the why

Once you have your formative data in hand, your next job is to read it systematically rather than react to it emotionally. A low score or a pile of confused exit tickets can feel discouraging, but the data itself is neutral. What matters is what you do with the patterns you find. This is where formative assessment data shifts from theory into real instructional decision-making.

Sort responses into categories before you count anything

Before you tally percentages or averages, physically sort student responses into groups. Three categories work well for most quick checks: students who demonstrated understanding, students who showed partial understanding, and students who missed the concept entirely. This sorting process forces you to actually read what students wrote rather than skimming for right or wrong answers.

Here’s a simple sorting framework you can apply to any written formative check:

| Category | What You See in the Work | Next Step |
|---|---|---|
| Got it | Accurate response with correct reasoning | Extend or move forward |
| Partial | Correct idea but missing evidence or logic | Targeted small group work |
| Not yet | Incorrect response or no attempt | Reteach the foundational concept |

Look for the specific error, not just the wrong answer

After sorting your responses, your goal is to identify the pattern behind the errors, not just count how many students got it wrong. Two students can give the same wrong answer for completely different reasons. One might have a gap in prior knowledge, while another misread the question or applied the right concept to the wrong step.

Ask yourself: Where exactly did the thinking break down? Was it the vocabulary? The procedure? A missing prerequisite skill? Looking for the "why" behind the error turns a vague sense that students struggled into a precise instructional target you can actually address. When you know that six students all skipped the same procedural step, you know exactly what to reteach and to whom, which makes your response far more focused than starting the lesson over from scratch.

Finding the pattern in student errors is more valuable than finding the percentage of students who got it wrong.
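Once you have jotted an error type next to each name, grouping by error pattern is simple bookkeeping. Here is one hedged way to do it in Python, assuming you keep your notes digitally. The function name `group_by_error`, the student labels, and the error descriptions are all hypothetical placeholders, not data from a real class.

```python
from collections import defaultdict


def group_by_error(notes: dict[str, str]) -> dict[str, list[str]]:
    """Group students by the error noted for them, largest group first."""
    groups: dict[str, list[str]] = defaultdict(list)
    for student, error in notes.items():
        groups[error].append(student)
    # Sort so the most common error pattern comes first.
    ordered = sorted(groups.items(), key=lambda item: len(item[1]), reverse=True)
    return dict(ordered)


if __name__ == "__main__":
    # Hypothetical notes from a two-step equations exit ticket.
    notes = {
        "Student A": "skipped inverse operation",
        "Student B": "misread the question",
        "Student C": "skipped inverse operation",
        "Student D": "skipped inverse operation",
    }
    for error, students in group_by_error(notes).items():
        print(f"{error}: {', '.join(students)}")
```

The largest group tells you where a short reteach will reach the most students, and the smaller groups point to quick one-on-one conversations. A paper roster with three columns does the same job.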

Step 4. Act fast with grouping, reteaching, and feedback

Once you know what broke down and for whom, you have everything you need to act. This is where using formative assessment data pays off in the most visible way. Speed matters here. The longer you wait to respond, the harder it becomes to connect the adjustment back to the original learning gap. Your goal is to make a targeted move within the next class period, not at the end of the unit.

Group students around the gap, not the grade

Resist the urge to pull your "low" students into a group by default. Instead, group students around the specific error pattern you identified in Step 3. Two students who scored differently on the same check might share the exact same misconception, while two students who both got it wrong might need completely different support. Build your groups around what the data shows, not what the gradebook says.

Flexible grouping based on a specific skill gap is more effective than fixed ability grouping based on overall performance.

Use a simple grouping template like this to organize your next class period:

| Group | Who’s in It | What They Need | How You’ll Address It |
|---|---|---|---|
| Extend | Students who demonstrated full understanding | Deeper application | Independent challenge task |
| Reteach | Students with partial understanding | Targeted review | Small group with teacher |
| Rebuild | Students missing foundational concept | Prior knowledge repair | Direct reteach, simplified model |

Reteach the specific thing that broke down

When you reteach, don’t repeat the same explanation in the same way. If the original approach didn’t work for a group of students, a word-for-word repeat won’t either. Instead, isolate the specific step or concept that caused confusion and approach it from a different angle. Use a concrete example, a visual model, or a worked example with student input. Keep the reteach short and focused so you don’t overwhelm students with everything at once.

Give feedback that tells students what to do next

Feedback works best when it names the next action, not just the problem. Instead of writing "missing evidence" on a student’s exit ticket, write "find one quote from the text that supports your claim and add it here." That shift turns your feedback into a clear instructional move students can follow without guessing what you want them to fix.

Keep the loop going

Formative assessment only works if you treat it as a continuous cycle rather than a one-time event. You collect evidence, you read the patterns, you act on what you find, and then you check again to see if your adjustment actually worked. That loop is the whole point. Knowing how to use formative assessment data isn’t about running one perfect exit ticket. It’s about building a habit of looking at student work regularly and making small, targeted adjustments that compound over time.

Your next step doesn’t have to be complicated. Pick one lesson next week, design a single formative check around one specific learning target, and decide in advance what you’ll do with each possible result. Run the check, sort the responses, and make one instructional move based on what you see. That’s the whole process. If you want more practical tools and strategies to support your classroom decisions, visit The Cautiously Optimistic Teacher for resources built with real teachers in mind.